Owing to its real-time rendering performance, 3D Gaussian Splatting (3DGS) has emerged as the leading method for radiance field reconstruction. However, its reliance on spherical harmonics (SH) for color encoding inherently limits its ability to separate diffuse and specular components, making it difficult to represent complex reflections accurately. To address this, we propose an enhanced Gaussian kernel that explicitly models specular effects through view-dependent opacity. We also introduce an error-driven compensation strategy that improves rendering quality in existing 3DGS scenes. Our method begins with 2D Gaussian initialization and then adaptively inserts and optimizes enhanced Gaussian kernels, ultimately producing an augmented radiance field. Experiments demonstrate that our method not only surpasses state-of-the-art NeRF methods in rendering performance but also achieves greater parameter efficiency.

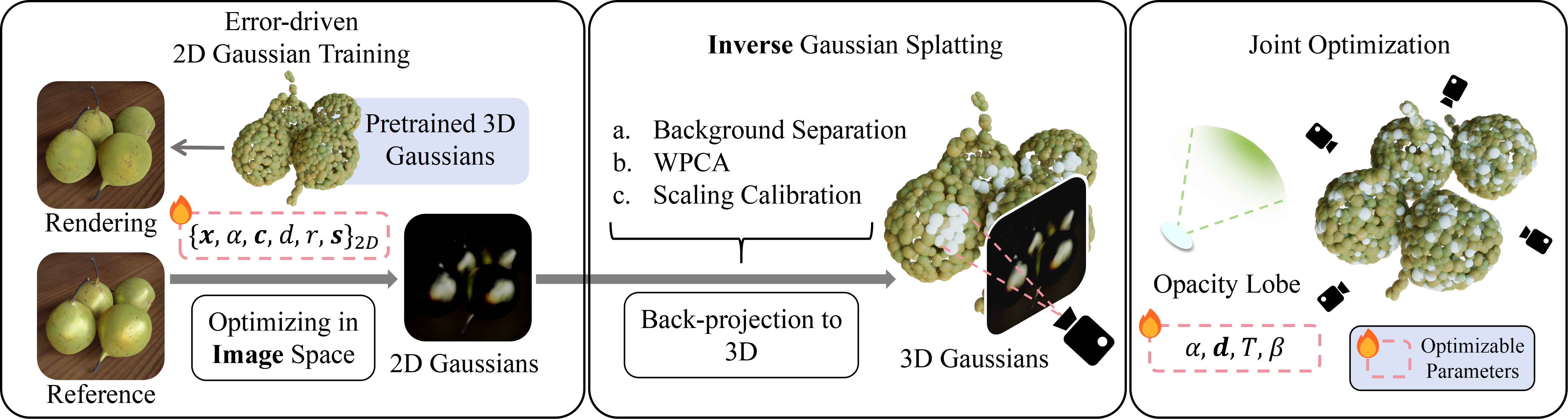

We introduce an error-driven densification strategy: we first optimize 2D Gaussians in image space to reduce residual errors (left). Then we leverage depth map information to back-project 2D Gaussians into world space, and apply clustering, WPCA, and scaling calibration to minimize projection errors. The new primitives are initialized with a view-dependent opacity lobe oriented toward the corresponding camera (middle). We then jointly optimize the augmented Gaussians with the original scene to recover challenging view-dependent color and improve photometric consistency (right). Our post‑enhancement is plug‑and‑play with existing 3DGS frameworks and achieves higher quality with fewer SH parameters, especially for complex reflections.
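The back-projection and WPCA steps described above can be sketched as follows. This is a minimal illustration, not the authors' implementation: it assumes a pinhole camera with intrinsics `K`, a 4x4 camera-to-world matrix, z-depth sampled from the depth map, and that WPCA stands for weighted PCA. The function names `backproject` and `weighted_pca` are hypothetical, and the clustering and scale-calibration steps are omitted.

```python
import numpy as np

def backproject(pixels, depths, K, cam_to_world):
    """Lift pixel coordinates to world space (assumed pinhole model).

    pixels: (N, 2) array of (u, v); depths: (N,) z-depth per pixel;
    K: (3, 3) intrinsics; cam_to_world: (4, 4) rigid transform.
    """
    ones = np.ones((len(pixels), 1))
    uv1 = np.concatenate([pixels, ones], axis=1)
    # Camera-space points on the z = 1 plane, then scaled by depth.
    pts_cam = (uv1 @ np.linalg.inv(K).T) * depths[:, None]
    pts_h = np.concatenate([pts_cam, ones], axis=1)
    return (pts_h @ cam_to_world.T)[:, :3]

def weighted_pca(points, weights):
    """Weighted mean and principal axes of a 3D point set.

    Returns (mean, variances, axes) with variances in descending order
    and axes[:, i] the unit direction for variances[i].
    """
    w = weights / weights.sum()
    mean = w @ points
    centered = points - mean
    cov = (centered * w[:, None]).T @ centered
    eigvals, eigvecs = np.linalg.eigh(cov)  # ascending order
    return mean, eigvals[::-1], eigvecs[:, ::-1]
```

Under these assumptions, the weighted mean could serve as the center of a new 3D primitive and the principal axes and variances as its initial orientation and scale; the weights might come from the residual error or the 2D Gaussian footprint.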

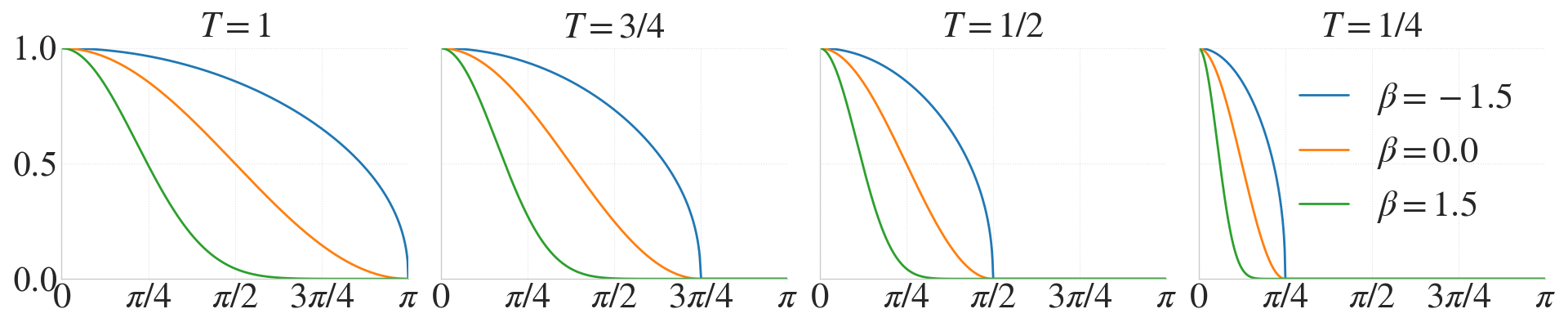

Inspired by classical Phong shading, we model view-dependent opacity with a cosine-weighted function whose shape is controlled by two parameters: β, which governs the lobe's sharpness, and T, which determines its angular extent. Together with the central orientation of the lobe, each new kernel adds 5 learnable parameters to a standard Gaussian primitive.
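To make the roles of β and T concrete, here is a minimal sketch of one possible cosine-weighted lobe. The exact functional form is not given in this text, so the formula below is an assumption chosen only to match the description: the weight is 1 along the lobe axis, falls to 0 at angle T from it, and is raised to the power β in Phong fashion. The name `lobe_weight` and the convention that `view_dir` points from the Gaussian center toward the camera are also hypothetical.

```python
import math

def lobe_weight(view_dir, lobe_axis, beta, T):
    """Hypothetical cosine-weighted opacity lobe (assumed form).

    view_dir, lobe_axis: 3-vectors (need not be normalized).
    beta: sharpness exponent (> 0). T: angular extent in radians, 0 < T <= pi.
    Returns a multiplier in [0, 1] for the primitive's base opacity.
    """
    def normalize(v):
        n = math.sqrt(sum(c * c for c in v))
        return [c / n for c in v]

    v, a = normalize(view_dir), normalize(lobe_axis)
    cos_theta = sum(x * y for x, y in zip(v, a))
    cos_T = math.cos(T)
    if cos_theta <= cos_T:
        return 0.0  # viewing direction lies outside the lobe
    # Rescale so the weight is 1 on the axis and 0 at the lobe boundary.
    x = (cos_theta - cos_T) / (1.0 - cos_T)
    return x ** beta
```

In this sketch the effective opacity would be the base opacity multiplied by `lobe_weight(...)`. A larger β narrows the highlight around the axis, while T sets the hard cutoff angle; storing the axis as a 3-vector plus β and T would account for the 5 added parameters.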

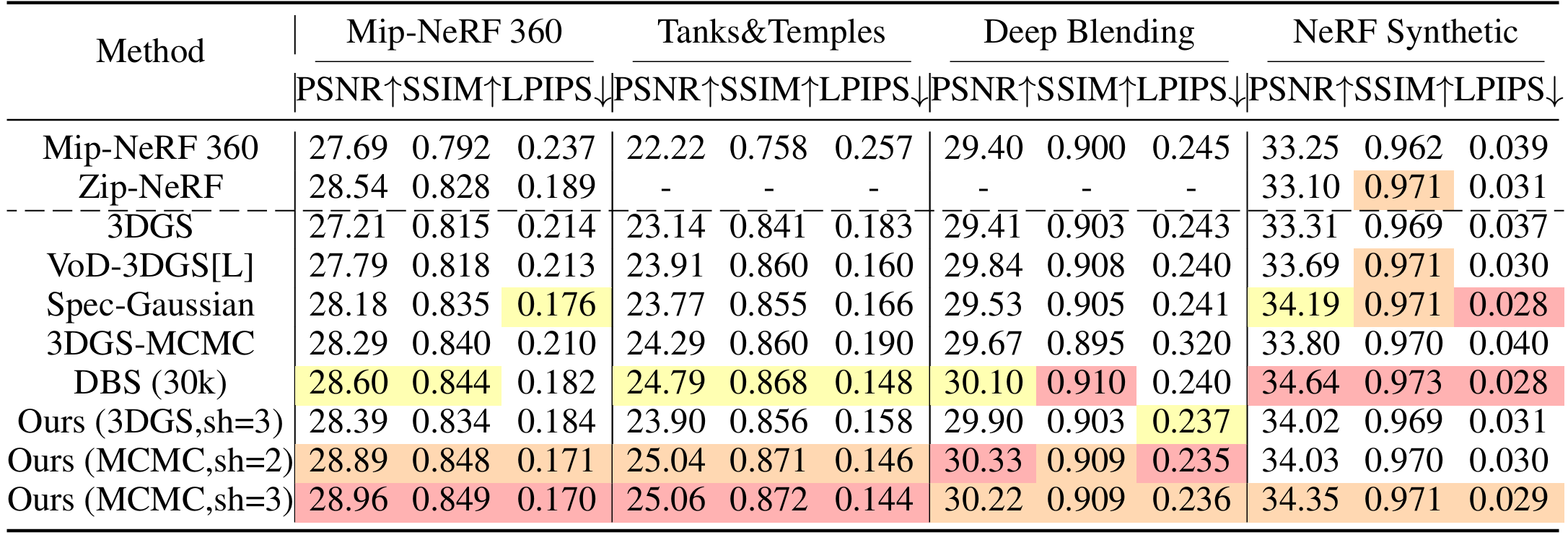

Our Gaussian supplementation strategy significantly enhances rendering quality across existing GS scenes. Notably, within the MCMC framework, second-order SH (sh=2) uses 21 fewer parameters per primitive than third-order SH (sh=3) yet achieves comparable rendering quality. Both configurations outperform the state-of-the-art implicit method [Barron et al. 2023], demonstrating that our method facilitates the adaptation of Gaussian splatting to low-end hardware platforms. Compared with DBS [Liu et al. 2025], the state-of-the-art explicit approach, our method achieves better results on real-world datasets. Compared with the specular-aware methods VoD-3DGS and Spec-Gaussian, our method achieves better results on almost all datasets.
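The figure of 21 parameters follows from the standard SH coefficient count: a degree-L expansion has (L+1)² coefficients per color channel. The short check below assumes three RGB channels and counts the DC term as part of the SH color; the helper name `sh_color_params` is illustrative.

```python
def sh_color_params(degree: int, channels: int = 3) -> int:
    """Number of SH color coefficients per primitive for a given degree."""
    return channels * (degree + 1) ** 2

params_sh3 = sh_color_params(3)  # 3 * 16 = 48
params_sh2 = sh_color_params(2)  # 3 * 9  = 27
saved = params_sh3 - params_sh2  # 21 fewer parameters per primitive
```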

@inproceedings{yang2026augmented,
  title={Augmented Radiance Field: A General Framework for Enhanced Gaussian Splatting},
  author={Yixin Yang and Bojian Wu and Yang Zhou and Hui Huang},
  booktitle={The Fourteenth International Conference on Learning Representations},
  year={2026},
  url={https://xiaoxinyyx.github.io/AuGS/}
}

This work was supported in part by the National Key R&D Program of China (2024YFB3908500, 2024YFB3908502), NSFC (U21B2023), the Guangdong Basic and Applied Basic Research Foundation (2023B1515120026), and Scientific Development Funds from Shenzhen University.